Michael Williams & gunshot detection alerts

Williams spent nearly a year in custody before prosecutors moved to dismiss the case, citing insufficient evidence. News coverage and subsequent litigation questioned both the reliability of the vendor's alerts and how they were used in that case.

- AP News reporting on the dismissal (Aug 19, 2021)

- Chicago OIG analysis of ShotSpotter outcomes (Aug 24, 2021)

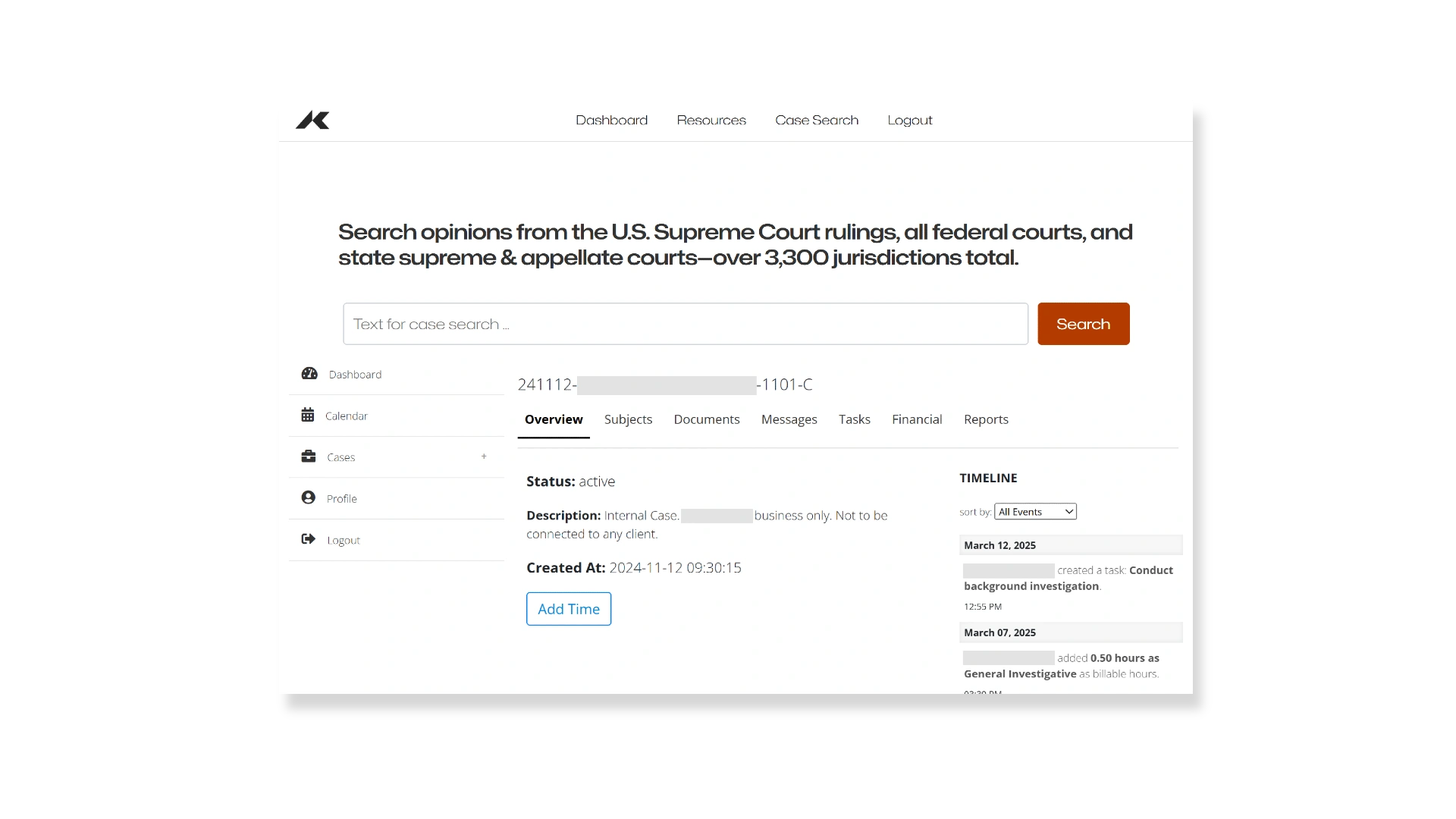

- Williams v. City of Chicago (case page)

Takeaway: treat alerts as leads, corroborate them with independent (orthogonal) data, and measure local confirmation rates.
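One way to make "measure local confirmation rates" concrete is to count, per area, how many alerts were later corroborated by independent evidence, and to attach an uncertainty interval, because small counts make raw percentages misleading. The sketch below is a minimal Python example under stated assumptions: the `Alert` record, its `district` and `confirmed` fields, and what counts as "confirmed" are hypothetical and would need to be defined against local records. The Wilson score interval is one standard choice for a binomial proportion, not a method taken from the sources above.

```python
import math
from collections import defaultdict
from typing import Iterable, NamedTuple


class Alert(NamedTuple):
    """One alert record. Field names are hypothetical, not a vendor schema."""
    district: str
    # Assumption: True only if independent evidence (e.g. casings, a victim,
    # a separate report) corroborated the alert; the alert alone never counts.
    confirmed: bool


def wilson_interval(successes: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a binomial proportion (z=1.96 gives ~95%)."""
    if n == 0:
        return (0.0, 1.0)  # no data: the rate is completely unknown
    p = successes / n
    denom = 1 + z**2 / n
    centre = (p + z**2 / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    # Clamp to [0, 1] to absorb floating-point error at the edges.
    return (max(0.0, centre - half), min(1.0, centre + half))


def confirmation_rates(alerts: Iterable[Alert]) -> dict[str, tuple[float, float, float, int]]:
    """Per-district (rate, low, high, n) for the share of alerts independently confirmed."""
    counts: dict[str, list[int]] = defaultdict(lambda: [0, 0])  # district -> [confirmed, total]
    for a in alerts:
        counts[a.district][1] += 1
        if a.confirmed:
            counts[a.district][0] += 1
    result = {}
    for district, (k, n) in counts.items():
        low, high = wilson_interval(k, n)
        result[district] = (k / n, low, high, n)
    return result
```

With toy records such as `[Alert("A", True), Alert("A", False), Alert("A", False)]`, district "A" gets a point rate of 1/3 but an interval of roughly 6% to 79%, which is the practical argument for reporting intervals: a handful of alerts says very little about how often they are right.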